
RAG-ready search on Scalingo: k-NN plugin now available for OpenSearch®

Introducing RAG

We're excited to announce that Scalingo for OpenSearch® now includes the k-NN feature, bringing full support for vector-based search. This marks an important step forward in our commitment to making powerful AI and semantic search capabilities accessible, secure, and easy to use, all on a fully managed platform hosted in France.

Why vector search matters

Traditional search engines rely on keywords and exact matches. Vector search changes the game by allowing you to search by meaning, not just text. By representing content and queries as numerical vectors, it's now possible to find the most relevant results even if they don't contain the exact words your users typed.
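To make "search by meaning" concrete, here is a minimal sketch that ranks two documents against a query using cosine similarity. The three-dimensional vectors are hand-made for illustration only. A real embedding model would produce them, typically with hundreds of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hand-made toy "embeddings" (assumption: illustrative values, not model output).
documents = {
    "How to reset your password": [0.9, 0.1, 0.0],
    "Quarterly sales report": [0.0, 0.2, 0.9],
}

# The query shares no keyword with either title, only a similar direction
# in vector space with the first one.
query_text = "I forgot my login credentials"
query_vector = [0.8, 0.3, 0.1]

best_match = max(
    documents,
    key=lambda title: cosine_similarity(documents[title], query_vector),
)
print(best_match)
```

The query and the best-matching title have no words in common; the match comes entirely from the vectors pointing in a similar direction.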

This opens up a wide range of use cases:

Semantic search for internal tools or knowledge bases

Intelligent document retrieval

AI assistants powered by Retrieval-Augmented Generation (RAG)

Search experiences that feel closer to how people actually think and ask questions

The k-NN plugin explained

The k-NN plugin (short for k-nearest neighbors) is what makes this possible. It allows OpenSearch® to store and search vector embeddings (numerical representations of content) created by models like all-MiniLM, available on Hugging Face, or OpenAI's text-embedding-3-small.

With it, OpenSearch® can return results based on semantic similarity, not just string matching.

It's fast, scalable, and well suited for Generative Artificial Intelligence (GenAI) applications where relevance and context really matter.
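As a rough sketch, a k-NN index is declared by enabling `index.knn` and mapping a field as `knn_vector` with a fixed dimension. The snippet below only builds the JSON body you would send with a `PUT /<index-name>` request. The field names `text` and `embedding` are assumptions chosen for this example, and the dimension of 384 assumes an all-MiniLM-L6-v2 style model (text-embedding-3-small defaults to 1536).

```python
import json

def build_knn_index_body(field_name="embedding", dimension=384):
    """Build an index definition with one text field and one vector field.

    field_name and dimension are illustrative defaults; the dimension must
    match the output size of the embedding model you actually use.
    """
    return {
        "settings": {"index": {"knn": True}},  # enable k-NN on this index
        "mappings": {
            "properties": {
                "text": {"type": "text"},
                field_name: {"type": "knn_vector", "dimension": dimension},
            }
        },
    }

index_body = build_knn_index_body()
print(json.dumps(index_body, indent=2))
```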

What this means on Scalingo

With the k-NN plugin built in, Scalingo for OpenSearch® can now act as a vector database, in addition to its existing search and observability features.

That means you can now:

Store and query vector embeddings

Combine structured search with semantic search

Power GenAI features like chatbots, document assistants or knowledge interfaces
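For instance, combining structured and semantic search could look like the sketch below: a `bool` query whose `filter` clause restricts results on a keyword field while a `knn` clause ranks them by vector similarity. The `team` and `embedding` field names are hypothetical, and the body is only built here, not sent. In practice it would go to `POST /<index-name>/_search`.

```python
def build_filtered_knn_query(query_vector, k=5, team="platform"):
    """Build a search body mixing a structured filter with a k-NN clause.

    Assumes an index with a keyword field "team" and a knn_vector field
    "embedding"; both names are illustrative.
    """
    return {
        "size": k,
        "query": {
            "bool": {
                # Structured part: keep only documents owned by one team.
                "filter": [{"term": {"team": team}}],
                # Semantic part: rank by proximity to the query vector.
                "must": [{"knn": {"embedding": {"vector": query_vector, "k": k}}}],
            }
        },
    }

search_body = build_filtered_knn_query([0.1, 0.2, 0.3], k=3)
```

Because the filter is applied alongside the nearest-neighbor results, a query written this way may return fewer than `k` hits when few neighbors satisfy the filter.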

All this while benefiting from what Scalingo already offers:

Fully managed infrastructure

Hosting in France

Seamless integration with your apps

Transparent per-minute pricing

Example: Leverage your internal knowledge base with AI-powered search

Your team likely has a large collection of internal documents: technical specs, onboarding guides, process docs, and meeting notes. Traditionally, finding the right information means searching by keywords, and often scrolling through outdated or loosely relevant results. If, like us, you use Notion as your internal wiki and documentation hub, you understand the struggle…

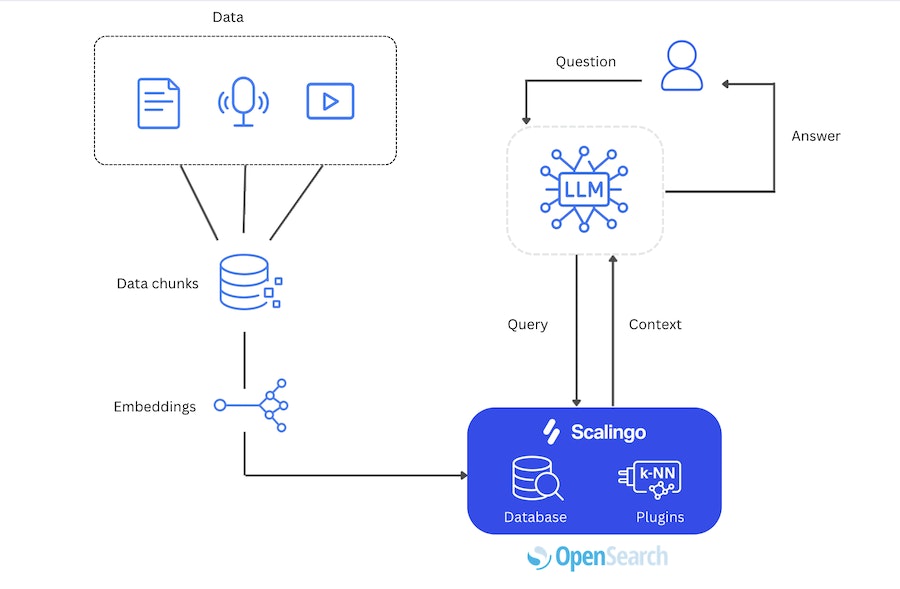

With vector-based search and Retrieval-Augmented Generation (RAG), you can take your searching to the next level. How? Leverage the open source ecosystem to generate and index your vectors, and use OpenSearch® to power your semantic search in four steps:

Document ingestion: begin by preparing your documents (any kind of data you wish to embed) for semantic search. This typically involves chunking the content and generating vector embeddings using a model such as all-MiniLM, available on Hugging Face, or text-embedding-3-small from OpenAI. Tools like LangChain, LlamaIndex, or a custom script can be used to orchestrate this process.

Vector indexing: the generated embeddings must then be sent to an OpenSearch® instance hosted on Scalingo. The k-NN plugin within OpenSearch® will store them in a dedicated vector index, optimized for high-performance nearest neighbor searches.

Contextual querying: when a user asks a question to an assistant backed by a large language model (LLM) such as ChatGPT or Mistral AI, the question is converted into a query embedding with the same embedding model and sent to OpenSearch®, which returns the closest documents by vector similarity.

Response generation: The retrieved documents are provided as "context" to a large language model (LLM). The model then generates a grounded, accurate response based on the indexed content.
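The four steps above can be sketched end to end without any network call. In this sketch, `fake_embed` is a hypothetical stand-in for a real embedding model, the index name `internal-docs` and the field names are assumptions, and the functions only build the payloads you would send to the OpenSearch® `_bulk` and `_search` endpoints and to your LLM.

```python
import json

def chunk_text(text, max_words=50):
    """Step 1a: split a document into chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]

def fake_embed(text, dimension=8):
    """Step 1b: HYPOTHETICAL embedding, counting words into hashed buckets.

    Replace with a real model; the index mapping's dimension must match.
    """
    vector = [0.0] * dimension
    for word in text.lower().split():
        vector[sum(map(ord, word)) % dimension] += 1.0
    return vector

def build_bulk_payload(index_name, chunks):
    """Step 2: newline-delimited JSON body for the _bulk endpoint."""
    lines = []
    for chunk in chunks:
        lines.append(json.dumps({"index": {"_index": index_name}}))
        lines.append(json.dumps({"text": chunk, "embedding": fake_embed(chunk)}))
    return "\n".join(lines) + "\n"  # _bulk bodies end with a newline

def build_knn_query(question, k=3):
    """Step 3: embed the question and ask for its k nearest chunks."""
    return {
        "size": k,
        "query": {"knn": {"embedding": {"vector": fake_embed(question), "k": k}}},
    }

def build_prompt(question, retrieved_chunks):
    """Step 4: pass the retrieved chunks to the LLM as context."""
    context = "\n---\n".join(retrieved_chunks)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )

# Toy run: one repetitive 140-word document, split into three chunks.
document = "Deployments are triggered by a git push. " * 20
chunks = chunk_text(document, max_words=50)
bulk_payload = build_bulk_payload("internal-docs", chunks)
query_body = build_knn_query("How do I deploy?")
# Here the first two chunks stand in for the hits OpenSearch would return.
prompt = build_prompt("How do I deploy?", chunks[:2])
```

Tools like LangChain or LlamaIndex wrap these same stages. Writing them out by hand mainly helps to see which payload goes where.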

The result? An AI assistant that rarely hallucinates, stays up to date with your internal knowledge, and can answer user questions in natural language, all powered by OpenSearch, right on Scalingo.

Get started today

Vector-based search is available right now to all OpenSearch users on Scalingo. Whether you're building a smarter internal search tool or exploring AI-powered assistants, this feature unlocks a new range of possibilities.

To get started, check out our documentation on OpenSearch extensions, or reach out to our team if you need any help.

Jennifer Taylor

At Scalingo, Jennifer leads growth and marketing initiatives, helping shape the company’s voice in the fast-evolving PaaS and cloud ecosystem. She loves translating complex cloud concepts into clear, engaging insights.
