In this article you'll meet Tobi Stockinger, who saw his MongoDB database grow from 0 to 50GB in 3 days, and you’ll learn how he handled the load with his NodeJS application.

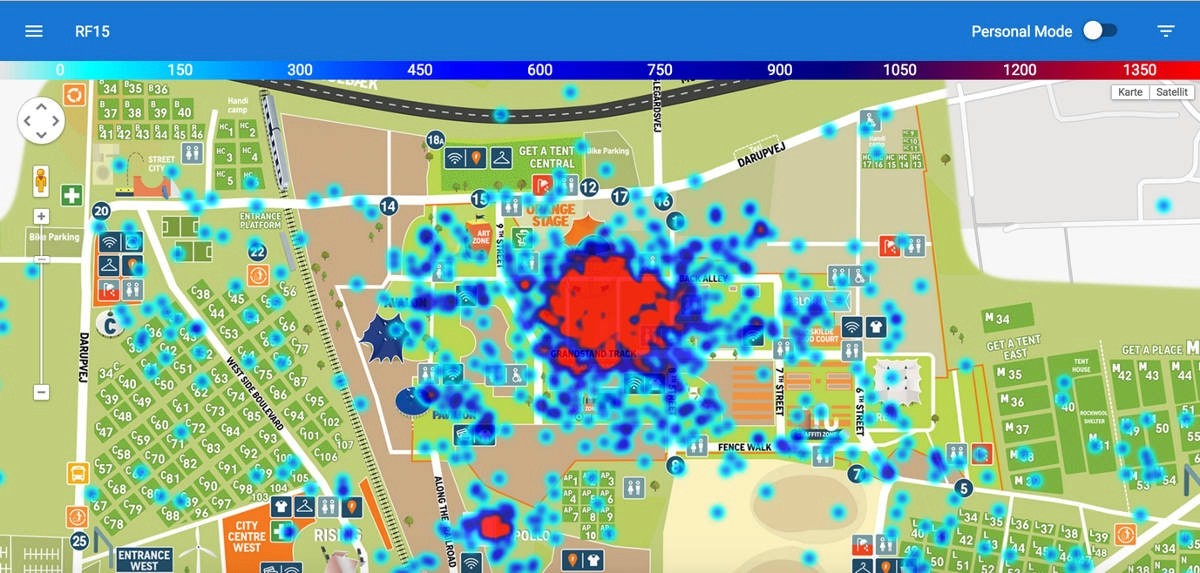

Figure 1 – Screenshot from the web-app. Crowd distribution (capped to 1500 users) during the Disclosure concert at the main stage (the Orange Stage).

Who are you?

My name is Tobi Stockinger and I’m a research and teaching assistant at the University of Munich (LMU). I'm happy to share my story with Scalingo, after a project of mine turned into a stress test for their system, which it passed successfully.

I have a degree in media informatics and my PhD research revolves around usable security and privacy. To increase the validity of research data, I try to find and establish new ways to conduct field studies. For that, I occasionally build prototypes that are quite close to actual products and make them accessible to the public. The project I was deploying on Scalingo was a NodeJS application which I built from scratch both on the back- and the front-end.

What is your company/lab doing?

The lab is doing various things, but this project was a cooperation between the University of Munich, a company called Magniware and the Roskilde Festival. IBM Denmark and a team at the Copenhagen Business School would also analyze the data we collect.

Can you describe the project you put on Scalingo?

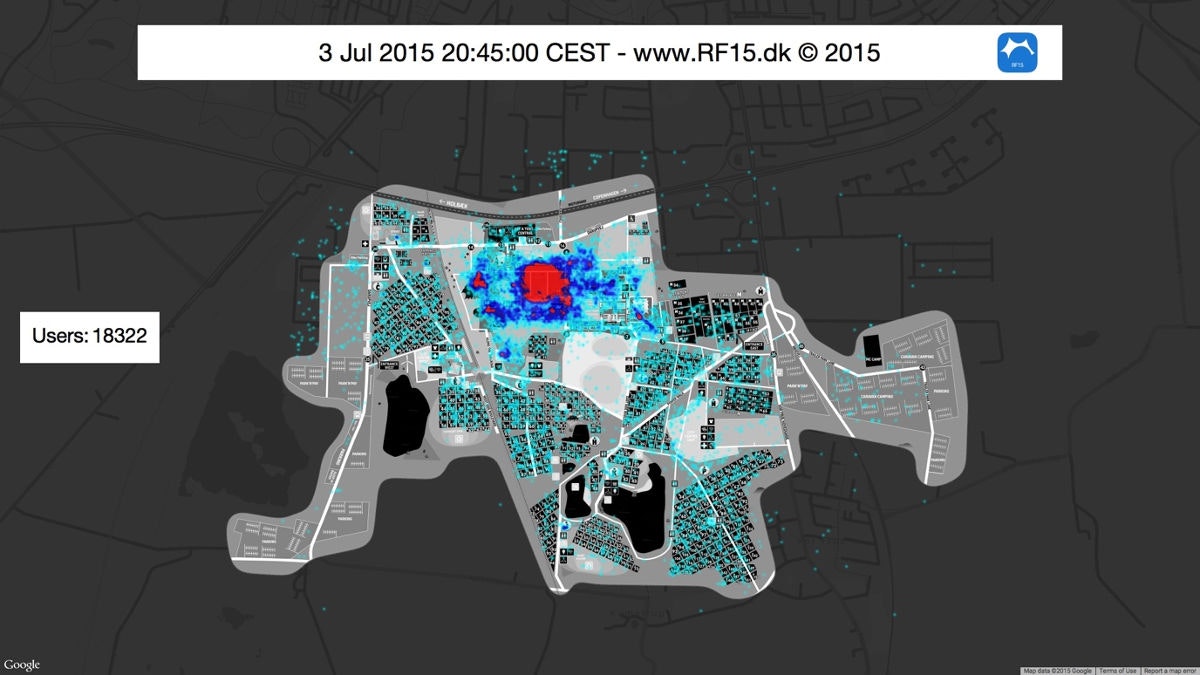

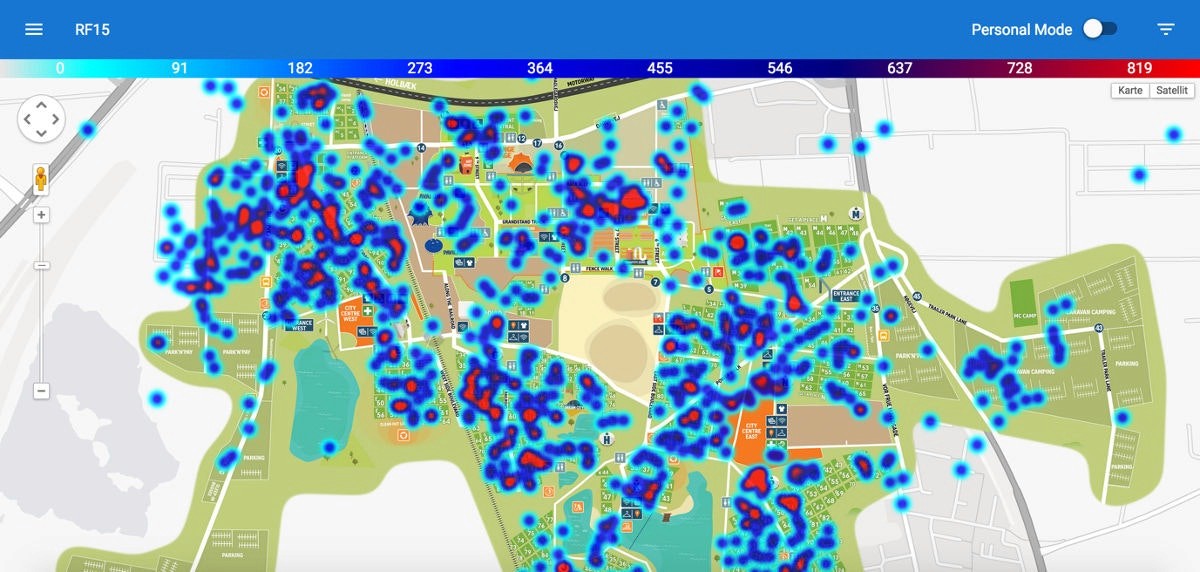

Our goal was to provide insights into crowd density at the Roskilde Festival 2015: where are people at a given time? Which concerts do they attend? Are there any bottlenecks when people walk from the camping area to the festival stages? To answer these questions, we leveraged the official festival iOS app, which usually has more than 60,000 downloads each year. Once users installed or upgraded the app, we asked them whether it was okay to collect anonymous location data during the one-week festival. If they agreed, location updates took place every 15 minutes. If there were other location fixes in between (e.g. because another application requested one), we logged them as well. Ideally, we received a maximum of 150 location updates per hour per user, which meant many small data points in a very short timeframe.

I was in charge of collecting, storing and visualizing the data through a web app. The police and the festival visitors could access a (near) real-time visualization (rf15.dk, link inactive) and identify the most crowded areas. We initially wanted to offer the ability to go back in time and look at a particular timeframe, but had to cancel these efforts after the servers sustained too much load.

Figure 2 - Paul McCartney playing on the main stage. More than 18,000 users within a 15-minute timeframe.

How did it go?

In the end it went well, also thanks to Scalingo. We were able to store most of the location data we meant to store. However, we did not anticipate the amount of traffic or how fast the database would grow, and beforehand we did not think about how much trouble this would cause us.

Some metrics of what happened during the week

When the festival app was released to the public, the number of clients naturally grew quickly, but after first contacting the server they stopped sending data. The smartphones only started sending data once the festival opened. By that time about 15,000 clients were alive, and we quickly found out that a single container was not enough, because we lost too many requests. That was bad! To make our NodeJS app compatible with the critical horizontal scaling, i.e. more containers, I needed to hot-fix part of the back-end, always at the risk of losing data. Still, that worked.
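The interview does not describe the hot-fix itself, but it does mention that user sessions ended up in the database. As a minimal sketch of that general idea, assuming nothing about the real code: each container keeps no session state of its own and reads and writes it through a shared store. `SharedSessionStore`, `createContainer` and the session fields are hypothetical names, and a `Map` stands in for the database.

```javascript
// Stand-in for a shared database collection; a Map keeps the sketch runnable.
class SharedSessionStore {
  constructor() {
    this.sessions = new Map();
  }

  load(sessionId) {
    // Return a copy so callers cannot mutate stored state by accident.
    const found = this.sessions.get(sessionId);
    return found ? { ...found } : { updates: 0 };
  }

  save(sessionId, session) {
    this.sessions.set(sessionId, { ...session });
  }
}

// A hypothetical "container": it keeps no session state in its own memory.
function createContainer(name, store) {
  return {
    handleUpdate(sessionId, location) {
      const session = store.load(sessionId);
      session.updates += 1;
      session.lastLocation = location;
      session.handledBy = name;
      store.save(sessionId, session);
      return session;
    },
  };
}

const store = new SharedSessionStore();
const containerA = createContainer("web-1", store);
const containerB = createContainer("web-2", store);

// The same client may hit a different container on every request.
containerA.handleUpdate("client-42", { lat: 55.62, lon: 12.08 });
const session = containerB.handleUpdate("client-42", { lat: 55.63, lon: 12.08 });
console.log(session.updates, session.handledBy); // 2 web-2
```

Because the second container sees the update made by the first, any number of containers can be added behind a load balancer. The price is one extra store round trip per request.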

However, this drastically increased the number of database operations, because user sessions were now stored there. Handling this on top of all the inserts for the location data slowed down queries a lot, so we had to work on that as well. I had to remotely tell all 35,000 clients that were contacting us by that time to stop sending data, which meant risking losing them for good. When traffic was down to a minimum during the night, I created new database indexes that would handle queries more efficiently.
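Which indexes were created is not stated. Purely as an illustration, the sketch below defines two plausible ones for location data and creates them through an object shaped like a MongoDB Node.js driver collection, whose `createIndex(keys)` returns a promise. The field names `timestamp` and `clientId` and the helper `ensureLocationIndexes` are assumptions, not the project's schema.

```javascript
// Hypothetical index definitions; the field names are assumptions.
const LOCATION_INDEXES = [
  { timestamp: 1 },               // time-window queries ("last 15 minutes")
  { clientId: 1, timestamp: -1 }, // latest fix per client
];

// `collection` is expected to behave like a MongoDB Node.js driver Collection:
// createIndex(keys) returns a promise that resolves to the index name.
async function ensureLocationIndexes(collection) {
  const names = [];
  for (const keys of LOCATION_INDEXES) {
    // Create the indexes one after another to limit load on a busy database.
    names.push(await collection.createIndex(keys));
  }
  return names;
}
```

Building indexes on a large, busy collection is itself expensive, which is one reason to do it during a quiet period, as described above.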

Also, when the front-end stopped showing anything, I had to prevent the heavy database aggregations that were triggered whenever people interacted with the front-end of the web app. For this reason, I wrote a tiny daemon that aggregated the data at set intervals and cached the results in the database. That way, I traded real-time functionality for performance. All this happened while we were live, and we did not have a second chance to do the project: when do you have a festival with more than 130,000 people to collect valuable data?
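The daemon's code is not shown, so the following is only a sketch of the pattern under stated assumptions: location points are bucketed into grid cells, distinct clients are counted per cell for the last 15 minutes, and the result is written to a cache that the front-end reads instead of running the aggregation itself. The grid size, the field names and the in-memory `cache` object (standing in for the database) are all hypothetical.

```javascript
const CELLS_PER_DEGREE = 1000;       // illustrative grid resolution
const WINDOW_MS = 15 * 60 * 1000;    // 15-minute window, as in the figure captions

// Map a coordinate to a grid-cell key such as "55621:12078".
function cellKey(lat, lon) {
  return `${Math.floor(lat * CELLS_PER_DEGREE)}:${Math.floor(lon * CELLS_PER_DEGREE)}`;
}

// Count distinct clients per cell among points inside [now - WINDOW_MS, now].
function aggregate(points, now) {
  const clientsPerCell = new Map();
  for (const p of points) {
    if (p.timestamp < now - WINDOW_MS || p.timestamp > now) continue;
    const key = cellKey(p.lat, p.lon);
    if (!clientsPerCell.has(key)) clientsPerCell.set(key, new Set());
    clientsPerCell.get(key).add(p.clientId);
  }
  const counts = {};
  for (const [key, clients] of clientsPerCell) counts[key] = clients.size;
  return counts;
}

// What the front-end reads. The interview says the real cache lived in the
// database; a plain object stands in for it here.
const cache = { computedAt: null, counts: {} };

// One daemon tick: recompute the aggregate and overwrite the cache.
function refreshCache(points, now) {
  cache.counts = aggregate(points, now);
  cache.computedAt = now;
  return cache;
}
```

A daemon would call `refreshCache` on a timer, for example once a minute with `setInterval`. Front-end requests then cost a single cache read, at the price of data that can be up to one interval old.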

We had about 46,000 clients in total during 3 days sending data every 15 seconds while we scaled up to 10 XL containers relying on an 8GB MongoDB database.

The final three days were then mostly an easy cruise. We had about 46,000 clients in total. On Scalingo, we scaled up to 10 XL containers relying on an 8GB MongoDB database. After the festival we took a deep breath, exported 60GB of data to our own (smaller-scale) servers and scaled down the app.

What did you learn?

The most important thing I personally learned is to plan ahead and to always have a backup plan. I also realized the importance of being able to scale out an app instead of just scaling up. Had we used our own machine, it probably would not have been able to handle all the requests. So we were happy to have Scalingo dealing with that and contributing to the project’s success.

Figure 3 - The morning hours: fewer location updates because people turn off their phones to save battery.

What’s next? Bigger scale? More traffic next time?

We hope to provide our crowd insights and real-time visualization to other festivals or events as well. We have learned so much and are prepared for any scale now (it hardly gets bigger than Roskilde). We will make sure to reduce traffic by filtering the data more aggressively before it is transferred to the server. And of course, we will scale properly from “day one”.
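How the data would be filtered is left open. One common approach, shown here only as an assumption-laden sketch, is to skip a location fix unless the client has moved a minimum distance or the last transmitted fix is getting old. The real client was an iOS app; JavaScript is used to match the rest of the stack, and the thresholds and function names are hypothetical.

```javascript
const EARTH_RADIUS_M = 6371000;

// Great-circle distance in meters between two {lat, lon} points (haversine formula).
function distanceMeters(a, b) {
  const toRad = (deg) => (deg * Math.PI) / 180;
  const dLat = toRad(b.lat - a.lat);
  const dLon = toRad(b.lon - a.lon);
  const h =
    Math.sin(dLat / 2) ** 2 +
    Math.cos(toRad(a.lat)) * Math.cos(toRad(b.lat)) * Math.sin(dLon / 2) ** 2;
  return 2 * EARTH_RADIUS_M * Math.asin(Math.sqrt(h));
}

// Send a fix only if the client moved far enough or the last sent fix is old.
// Both thresholds are illustrative defaults.
function shouldSend(lastSent, fix, minMeters = 50, maxAgeMs = 15 * 60 * 1000) {
  if (!lastSent) return true;
  if (fix.timestamp - lastSent.timestamp >= maxAgeMs) return true;
  return distanceMeters(lastSent, fix) >= minMeters;
}

// Apply the rule to a stream of fixes, comparing against the last one sent.
function filterFixes(fixes) {
  const sent = [];
  for (const fix of fixes) {
    if (shouldSend(sent[sent.length - 1], fix)) sent.push(fix);
  }
  return sent;
}
```

A client standing in front of a stage then sends roughly one update per `maxAgeMs` instead of one per location fix, while people walking between areas are still tracked.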

What do you think about Scalingo’s platform, support, etc?

We were really happy to have Scalingo for this project, and it is super comfortable to use in less critical situations as well. I think it is super easy to deploy your apps there: a simple “git push” is just genius! The CLI is awesome, it has everything that I need. But the most convincing argument for Scalingo is their support. There are real people who are eager to help you by day and by night. When our web app was drowning in requests, we received feedback and suggestions in the middle of the night. Thanks a lot!

Conclusion

And now some maths after the event:

10 x XL containers during 5 days: 10 × 0.08 × 24 × 5 = 96€

1 x 8GB MongoDB database (incl. 40GB disk storage) during 5 days: 115.20 × 5 / 30 = 19.20€

Extra database storage (20GB) during 5 days: 2 × 20 × 5 / 30 = 6.66€

Total cost to handle the load of 46,000 clients sending data every 15 seconds on a NodeJS/MongoDB application and to store the data during the 5-day festival: 121.86€.
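For readers who want to plug in their own numbers, the same arithmetic can be written as a few lines of JavaScript. The unit prices are read off the formulas above (and reading the factor 2 as a price per GB per month is an assumption); the 30-day month is the one those formulas use. Amounts are truncated to whole cents, which reproduces the figures quoted above.

```javascript
// Unit prices in EUR, taken from the formulas in the article.
const XL_CONTAINER_PER_HOUR = 0.08;
const MONGODB_8GB_PER_MONTH = 115.2;
const EXTRA_STORAGE_PER_GB_MONTH = 2; // assumed meaning of the factor 2
const DAYS = 5;
const DAYS_PER_MONTH = 30;

const containers = 10 * XL_CONTAINER_PER_HOUR * 24 * DAYS;
const database = (MONGODB_8GB_PER_MONTH * DAYS) / DAYS_PER_MONTH;
const storage = (EXTRA_STORAGE_PER_GB_MONTH * 20 * DAYS) / DAYS_PER_MONTH;
const total = containers + database + storage;

// Truncate to whole cents; the tiny epsilon absorbs floating-point noise.
const toCents = (eur) => Math.floor(eur * 100 + 1e-9) / 100;

console.log(toCents(containers), toCents(database), toCents(storage), toCents(total));
```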

See our pricing page for all the details: https://scalingo.com/pricing.

And now it’s your turn to be successful! Create an account now and see your app live on Scalingo in the next 2 minutes.